Primary Index

A primary index is an ordered file of records, which means that a primary index and a clustered index cannot exist on a table at the same time. This is because both of these index types physically order the records. More than one primary index or several clustered indexes also cannot exist because there may only be at most one physical ordering field per file.

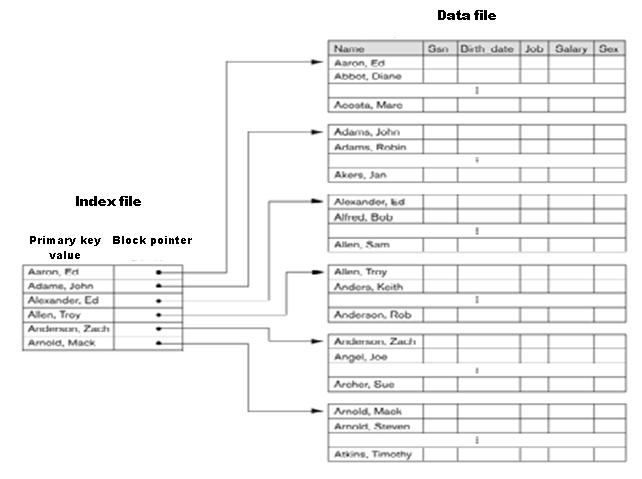

A primary index contains two fields; the primary key value and a disk block pointer. A primary index therefore consists of key-value pairs, just like the HashMap in Java or Dictionary in .NET. The key values in the index are of the same data type as the primary key in the data file and must be unique as these are used to identify records. Thus, if a table’s primary key is of the integer data type, then so will the key values in the primary index file. Each key in the index is mapped to a value, which is in this case a disk block pointer, which contains the address of a given disk block.

There exists one index record for each block in the data file. Each record has the value of the primary key field for the first record in a block as its key and a pointer to that block as its value. A block’s first record may be referred to as an anchor record of a block or a block anchor. This makes a primary index a sparse or non-dense index, meaning that there are only index records for some of the records in the data file; in this case, there are only records for each block in the data file.

A primary index file is substantially smaller than the data file for two reasons; there are fewer index records than there are records in the data file, and secondly, the index records consist of only two fields, whereas data file records potentially contain many fields (depending on the number of columns in the table). As a result, more index records than data file records can fit into a disk block. This means that a binary search on the index file requires fewer disk block accesses than a binary search on the data file. While a binary search on a data file requires log2b block accesses where b is the number of blocks in the data file, finding a record using a primary index requires log2b + 1 block accesses. This is because there is to be performed a binary search on the primary index to find the block pointer, and then a block lookup in the data file.

Problems with primary indexes

The main problem of primary indexes is what happens when inserting, updating or deleting. Since the records are physically ordered in the data file, records often need to be moved around to make room for new records. In the case of deletions, the severity depends on the implementation; closing the gaps in the blocks is often an expensive operation, but using delete markers and periodic reorganization reduces this overhead.

To make inserts more efficient, there are several approaches; empty space that is reserved for new records could be left in each block, but this is only a temporary solution as the original problem returns when this space is used up. For this particular reason, that approach does not seem feasible for use in practice. A more useful solution is to make use of an unordered overflow file. With this approach, new records are simply inserted at the end of the overflow file instead of in the main data file. This overflow file can then be merged with the main data file periodically. The advantage with this technique is that inserting new record becomes very efficient, but the process of finding records takes a hit in return. This is both because the search algorithm becomes more complex and because the overflow file needs to be searched in a linear fashion after the binary search of the primary index (assuming that the record to be found resides in the overflow file). This is, however, only a tradeoff when the most recent information is required; otherwise, the overflow file can be discarded in the search algorithm and the files merged every night, for instance.

Yet another approach is to have a linked list for each block in the data file in which records are inserted into. This is similar to the technique used in hashing to handle collisions where colliding elements are inserted into buckets. As with the previously mentioned overflow file, searching the overflow records involves a linear search, only this time the records to be searched belong to a specific block and not all of the records in the table. This will, on average, increase the efficiency of the search algorithm.