Understanding Analysis in Elasticsearch (Analyzers)

In Elasticsearch, the values of text fields are analyzed when documents are added or updated. So what does it mean that text is analyzed? When a document is indexed, its full text fields are run through an analysis process. By full text fields, I am referring to fields of the type text, and not keyword fields, which are not analyzed. I will get back to the details in a moment, but basically the process involves tokenizing text into terms, lowercasing them, etc. More generally speaking, the analysis process tokenizes and normalizes a block of text, which makes the text easier to search. You have full control over the analysis process, because you can choose which analyzer is used. The standard analyzer is sufficient in most cases, but I will get back to how to change the analysis process in case you need to do that.
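To see the difference between text and keyword fields in practice, here is a minimal sketch in Kibana Console syntax, assuming Elasticsearch 7.x or later. The index name `analysis-demo` and the field name `description` are hypothetical. The sketch relies on dynamic mapping, which maps a string value as a `text` field with a `keyword` sub-field, so a single document gives us both kinds of fields to compare with the Analyze API (introduced later in this post).

```console
# Index a document into the hypothetical index "analysis-demo"; dynamic mapping
# creates "description" as a text field with a "description.keyword" sub-field.
PUT /analysis-demo/_doc/1
{
  "description": "I'm in the mood for drinking semi-dry red wine!"
}

# Show the mapping that Elasticsearch generated.
GET /analysis-demo/_mapping

# Analyze the text the way the text field does (standard analyzer by default).
GET /analysis-demo/_analyze
{
  "field": "description",
  "text": "I'm in the mood for drinking semi-dry red wine!"
}

# Analyze the same text the way the keyword sub-field does.
GET /analysis-demo/_analyze
{
  "field": "description.keyword",
  "text": "I'm in the mood for drinking semi-dry red wine!"
}
```

The first `_analyze` request should return separate lowercased terms such as `i'm`, `semi`, and `dry`, while the second should return the entire string as a single, unchanged token, because keyword fields are not analyzed.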

The results of the analysis are what is actually stored within the index that a document is added to. More specifically, the analyzed terms are stored within something called the inverted index, which I will get back to soon. (The original document is kept as well, in the `_source` field, but that is not what full text queries search through.) This means that whenever we perform search queries, we are actually searching through the results of the analysis process and not the documents as they were when we added them to the index.
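Since searches run against the analyzed terms, it matters whether a query analyzes its own input. A `match` query runs the search text through the field's analyzer, while a `term` query looks up the text exactly as given. The following sketch, using a hypothetical index `search-demo` with a field `description`, shows the consequence:

```console
# Index a document into the hypothetical index "search-demo" and make it
# searchable immediately.
PUT /search-demo/_doc/1?refresh=true
{
  "description": "I'm in the mood for drinking semi-dry red wine!"
}

# match analyzes "Red Wine" into the terms "red" and "wine", so it finds the document.
GET /search-demo/_search
{
  "query": {
    "match": {
      "description": "Red Wine"
    }
  }
}

# term looks up "Wine" exactly as written; the inverted index only contains
# the lowercased term "wine", so this query returns no hits.
GET /search-demo/_search
{
  "query": {
    "term": {
      "description": "Wine"
    }
  }
}
```

This is also why `term` queries are generally used for keyword fields rather than text fields.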

So to recap: when we index a document, Elasticsearch takes its full text fields and runs them through an analysis process. The text fields are tokenized into terms, and the terms are converted to lowercase. At least that’s the default behavior. The results of this analysis process are added to something called the inverted index, which is what we run search queries against. More on that in a moment, because first it’s time to take a closer look at the analysis process and how it works in more detail.

A Closer Look at Analyzers

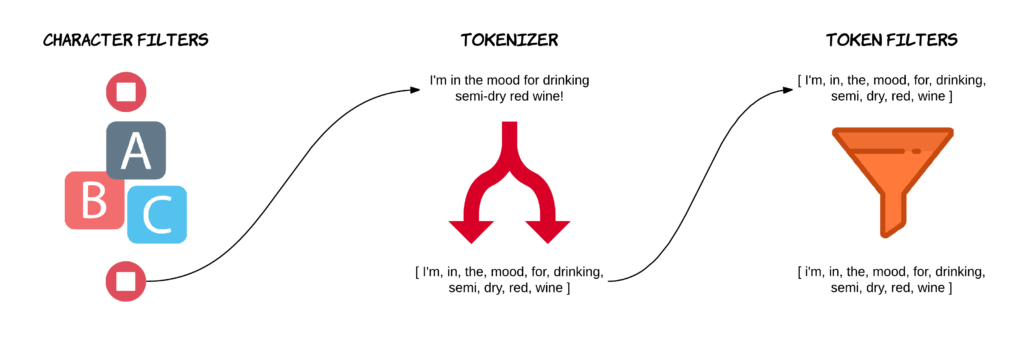

So I just introduced you to how Elasticsearch analyzes documents when they are indexed. Now I want to take a closer look at how that works. The work within the analysis process that I was talking about a moment ago is done by a so-called analyzer. An analyzer consists of three kinds of building blocks: character filters, a tokenizer, and token filters. An analyzer is basically a package of these building blocks, each of which changes the input stream. So when a document is indexed, its text goes through the following flow.

First, the text passes through zero or more character filters. A character filter receives a text field’s original text and can transform the value by adding, removing, or changing characters. An example of this could be to strip any HTML markup.
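A character filter can be tried out on its own with the Analyze API, which is covered later in this post. In this minimal sketch, the sample HTML text is made up for illustration, and the `keyword` tokenizer is used so that the filtered text comes back as one token instead of being split into words:

```console
# Run only the html_strip character filter; the keyword tokenizer keeps the
# filtered text together as a single token.
POST _analyze
{
  "tokenizer": "keyword",
  "char_filter": [ "html_strip" ],
  "text": "<p>I'm in the mood for <b>red wine</b>!</p>"
}
```

The `<p>` and `<b>` tags should be gone from the returned token. Note that `html_strip` may replace block-level tags such as `<p>` with line breaks rather than removing them without a trace.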

Afterwards, a tokenizer splits the text into individual tokens, which will usually be words. So if we have a sentence with ten words, we would get an array of ten tokens. An analyzer must have exactly one tokenizer. By default, a tokenizer named standard is used, which is based on the Unicode Text Segmentation algorithm. Without going into details, it basically splits the text by whitespace (more precisely, on word boundaries) and also removes most symbols, such as commas, periods, semicolons, etc. That’s because most symbols are not useful when it comes to searching, as they are intended for being read by humans. It is possible to change the tokenizer, for instance to a whitespace tokenizer that preserves these symbols, but that is rarely a good idea, as the standard tokenizer is the best choice in most cases.
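To compare the two tokenizers, the following request runs the example sentence used later in this post through the `whitespace` tokenizer instead of the standard one:

```console
# The whitespace tokenizer only splits on whitespace and leaves punctuation
# attached to the words.
POST _analyze
{
  "tokenizer": "whitespace",
  "text": "I'm in the mood for drinking semi-dry red wine!"
}
```

Unlike the standard tokenizer, it keeps `semi-dry` as a single token and returns `wine!` with the exclamation point still attached, so a search for `wine` would not match that token.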

Besides splitting text into tokens, tokenizers are also responsible for recording the position of each token, as well as the start and end character offsets of the word that the token represents. The offsets make it possible to map the tokens back to the original words, which is used to highlight matching words. The positions of the tokens are used when performing phrase searches and proximity searches.
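Token positions are what make phrase matching possible. In the sketch below, which uses a hypothetical index `phrase-demo`, the standard analyzer puts `dry` at position 7 and `wine` at position 9, with `red` in between. The phrase `dry wine` therefore only matches if we allow one position of slack through the `slop` parameter of the `match_phrase` query:

```console
# Index a document into the hypothetical index "phrase-demo" and make it
# searchable immediately.
PUT /phrase-demo/_doc/1?refresh=true
{
  "description": "I'm in the mood for drinking semi-dry red wine!"
}

# No hits: "wine" is not at the position directly after "dry".
GET /phrase-demo/_search
{
  "query": {
    "match_phrase": {
      "description": "dry wine"
    }
  }
}

# Matches: a slop of 1 tolerates the one extra term ("red") between them.
GET /phrase-demo/_search
{
  "query": {
    "match_phrase": {
      "description": {
        "query": "dry wine",
        "slop": 1
      }
    }
  }
}
```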

After the text has been split into tokens, the resulting token stream is run through zero or more token filters. A token filter may add, remove, or change tokens. This is similar to what a character filter does, except that token filters work with a token stream instead of a character stream.

There are many different token filters, with the simplest one being the lowercase token filter, which just converts all characters to lowercase. Another token filter that you can make use of is named stop. It removes common words, which are referred to as stop words. These are words such as “the,” “a,” “and,” “at,” etc. Such words don’t add much value in terms of searchability, because they are so common that they say very little about how relevant a document is. Another token filter that I want to mention is named synonym, which is useful for giving similar words the same meaning. For example, the words “nice” and “good” mean roughly the same, although they are different words, and the same is the case for the words “awful” and “terrible.” So by using the synonym token filter, you could match documents containing the word “nice” even if you are searching for the word “good.” Since the meaning is the same, such a document is highly likely to be just as relevant as if the query had used the other word.
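These token filters can be combined in a custom analyzer. The sketch below, with the hypothetical names `filter-demo`, `wine_synonyms`, and `wine_analyzer`, defines a synonym filter in the index settings and chains it after the lowercase and stop filters. Note that synonym is a token filter, not a built-in analyzer, so it has to be referenced from an analyzer's filter list like this rather than used as `"analyzer": "synonym"`.

```console
# Hypothetical index with a custom analyzer: the standard tokenizer followed by
# the lowercase, stop and (custom) synonym token filters, applied in that order.
PUT /filter-demo
{
  "settings": {
    "analysis": {
      "filter": {
        "wine_synonyms": {
          "type": "synonym",
          "synonyms": [
            "nice, good",
            "awful, terrible"
          ]
        }
      },
      "analyzer": {
        "wine_analyzer": {
          "type": "custom",
          "tokenizer": "standard",
          "filter": [ "lowercase", "stop", "wine_synonyms" ]
        }
      }
    }
  }
}

# Try the analyzer on a short sentence.
POST /filter-demo/_analyze
{
  "analyzer": "wine_analyzer",
  "text": "The wine was nice"
}
```

With the stop filter's default English stop word list, `the` and `was` should be removed, and `nice` should be accompanied by `good` at the same position. The order of the filters matters: the stop filter is case-sensitive by default, so placing it before `lowercase` would let `The` through.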

Alright, now that we have covered the different parts of analyzers, let’s take a short moment to walk through an example. When Elasticsearch encounters a string value in a document without an explicit mapping for it, it maps the field as a full text field (of type text, with a keyword sub-field) and applies the standard analyzer. This happens automatically unless you instruct Elasticsearch to do otherwise. The following example shows the default behavior of the standard analyzer.

With the standard analyzer, there are no character filters, so the text input goes straight to the tokenizer. The standard analyzer uses the tokenizer named standard, which does what I mentioned earlier: it filters out various symbols and splits the text by whitespace.

So the sentence “I’m in the mood for drinking semi-dry red wine!” gets split into tokens with some symbols removed. In particular, the hyphen and exclamation point are removed, but notice how the apostrophe isn’t. This array of tokens is then sent through a chain of token filters. In older versions of Elasticsearch, the first one was a token filter named standard, which didn’t actually do anything; its only purpose was to act as a placeholder in case some default filtering needed to be added in the future, and it was removed in Elasticsearch 7.0. The standard analyzer also contains a stop token filter for stop words, but it is disabled by default. This means that the only active token filter in the standard analyzer is the lowercase filter, which converts all letters to lowercase. In this example, that only affects the first token. And that’s it! That’s the process that all full text fields go through by default.
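The disabled stop filter can be switched on through the standard analyzer's `stopwords` parameter. Here is a minimal sketch using the Analyze API (covered in the next section), with the hypothetical index name `stopwords-demo` and analyzer name `standard_with_stopwords`:

```console
# Hypothetical index that configures the standard analyzer with the predefined
# English stop word list, which enables its stop filter.
PUT /stopwords-demo
{
  "settings": {
    "analysis": {
      "analyzer": {
        "standard_with_stopwords": {
          "type": "standard",
          "stopwords": "_english_"
        }
      }
    }
  }
}

# Analyze the example sentence with the configured analyzer.
POST /stopwords-demo/_analyze
{
  "analyzer": "standard_with_stopwords",
  "text": "I'm in the mood for drinking semi-dry red wine!"
}
```

Compared to the default standard analyzer, `in`, `the`, and `for` should be missing from the output, since they are on the `_english_` stop word list.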

There is an Analyze API which can be used to test the result of applying character filters, tokenizers, token filters and analyzers as a whole to some text. This is very useful for understanding how the various parts of analyzers work, so let’s take a quick look at that.

Using the Analyze API

We can use the Analyze API to check how specific character filters, tokenizers, token filters, or analyzers handle text inputs. What I want to do is to take the example sentence from before and go through each step that the standard analyzer performs. The end result will be the same as you saw before, so the point is to show you how to use the Analyze API and how it can help you debug and better understand the analysis process for a given piece of text.

Since the standard analyzer doesn’t make use of a character filter, the first step is the tokenizer, namely the standard tokenizer.

```console
POST _analyze
{
  "tokenizer": "standard",
  "text": "I'm in the mood for drinking semi-dry red wine!"
}
```

The result of this query is what you saw before, but apart from the tokens, you also get some additional information out of the Analyze API, such as character offsets.

```json
{
  "tokens": [
    {
      "token": "I'm",
      "start_offset": 0,
      "end_offset": 3,
      "type": "<ALPHANUM>",
      "position": 0
    },
    {
      "token": "in",
      "start_offset": 4,
      "end_offset": 6,
      "type": "<ALPHANUM>",
      "position": 1
    },
    {
      "token": "the",
      "start_offset": 7,
      "end_offset": 10,
      "type": "<ALPHANUM>",
      "position": 2
    },
    {
      "token": "mood",
      "start_offset": 11,
      "end_offset": 15,
      "type": "<ALPHANUM>",
      "position": 3
    },
    {
      "token": "for",
      "start_offset": 16,
      "end_offset": 19,
      "type": "<ALPHANUM>",
      "position": 4
    },
    {
      "token": "drinking",
      "start_offset": 20,
      "end_offset": 28,
      "type": "<ALPHANUM>",
      "position": 5
    },
    {
      "token": "semi",
      "start_offset": 29,
      "end_offset": 33,
      "type": "<ALPHANUM>",
      "position": 6
    },
    {
      "token": "dry",
      "start_offset": 34,
      "end_offset": 37,
      "type": "<ALPHANUM>",
      "position": 7
    },
    {
      "token": "red",
      "start_offset": 38,
      "end_offset": 41,
      "type": "<ALPHANUM>",
      "position": 8
    },
    {
      "token": "wine",
      "start_offset": 42,
      "end_offset": 46,
      "type": "<ALPHANUM>",
      "position": 9
    }
  ]
}
```

Next up are the token filters. Older versions of the standard analyzer used the standard and lowercase token filters, but since the standard token filter did nothing (and has been removed as of Elasticsearch 7.0), we can just use the lowercase token filter to see the same effect.

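```console
POST _analyze
{
  "filter": [ "lowercase" ],
  "text": "I'm in the mood for drinking semi-dry red wine!"
}
```

```json
{
  "tokens": [
    {
      "token": "i'm in the mood for drinking semi-dry red wine!",
      "start_offset": 0,
      "end_offset": 47,
      "type": "word",
      "position": 0
    }
  ]
}
```

As you can see, the first word is lowercased, since it was the only capitalized word. Notice, however, that the whole sentence comes back as a single token: this request doesn’t specify a tokenizer, so the text isn’t split into words, and the request only demonstrates the lowercase step on its own. If we wanted to add a character filter, we would add a key named char_filter.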
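To reproduce both steps in one request, the tokenizer and the token filter can be specified together. Setting the Analyze API's `explain` parameter to `true` additionally breaks the response down by step, showing the tokens produced by the tokenizer and then by each token filter:

```console
# Standard tokenizer followed by the lowercase token filter, mirroring the
# default standard analyzer; "explain" reports the output of each step.
POST _analyze
{
  "tokenizer": "standard",
  "filter": [ "lowercase" ],
  "text": "I'm in the mood for drinking semi-dry red wine!",
  "explain": true
}
```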

These were the steps that the standard analyzer performs, and the results match what I showed you earlier in this post. If we run a request that specifies the standard analyzer itself, we get the same results, because the analyzer performs exactly the steps that you just saw.

```console
POST _analyze
{
  "analyzer": "standard",
  "text": "I'm in the mood for drinking semi-dry red wine!"
}
```

```json
{
  "tokens": [
    {
      "token": "i'm",
      "start_offset": 0,
      "end_offset": 3,
      "type": "<ALPHANUM>",
      "position": 0
    },
    {
      "token": "in",
      "start_offset": 4,
      "end_offset": 6,
      "type": "<ALPHANUM>",
      "position": 1
    },
    {
      "token": "the",
      "start_offset": 7,
      "end_offset": 10,
      "type": "<ALPHANUM>",
      "position": 2
    },
    {
      "token": "mood",
      "start_offset": 11,
      "end_offset": 15,
      "type": "<ALPHANUM>",
      "position": 3
    },
    {
      "token": "for",
      "start_offset": 16,
      "end_offset": 19,
      "type": "<ALPHANUM>",
      "position": 4
    },
    {
      "token": "drinking",
      "start_offset": 20,
      "end_offset": 28,
      "type": "<ALPHANUM>",
      "position": 5
    },
    {
      "token": "semi",
      "start_offset": 29,
      "end_offset": 33,
      "type": "<ALPHANUM>",
      "position": 6
    },
    {
      "token": "dry",
      "start_offset": 34,
      "end_offset": 37,
      "type": "<ALPHANUM>",
      "position": 7
    },
    {
      "token": "red",
      "start_offset": 38,
      "end_offset": 41,
      "type": "<ALPHANUM>",
      "position": 8
    },
    {
      "token": "wine",
      "start_offset": 42,
      "end_offset": 46,
      "type": "<ALPHANUM>",
      "position": 9
    }
  ]
}
```

And that’s how text fields are analyzed when indexing documents. But what actually happens with the results of the analysis process? They are stored in a so-called inverted index, which is the topic of a separate article.

